Humans steer, agents execute

The operator should set the target, the invariants, and the approval rails. Agents should own the execution instead of asking to be babysat every few minutes.

Daniel G. Wilson / Founder / Legion Health

San Francisco, California

I build AI systems for high-stakes environments: control planes, approvals, evaluation harnesses, and many-agent workflows that survive messy reality, not just demo well.

01 / Thesis

Reliable AI is not a model-selection problem. The model matters, but production truth is usually decided by the harness around it, the environment it has to survive in, and whether many agents can operate in parallel without colliding or hallucinating authority.

Throughput comes from parallelism, isolated worktrees, and accepting variance. The important number is not agent count for its own sake, but how many real capabilities can run safely at once.

In software, the clean handoff is a pull request with evidence. Humans should review the artifact at the end, not sit in the loop mid-flight unless the system truly needs intervention.

Dangerous autonomy only works when the environment is shaped first: ceilings, sandboxing, spend limits, explicit tools, and replayable evidence. Then you can let agents move.

The bottleneck is not code generation. The bottleneck is whether you can tell what is correct, what failed, and what should happen next without turning every run into a manual investigation.

02 / Selected Work

The throughline is simple: software that has to do real work in the world, under real constraints, with enough clarity that people can trust it. That includes the development workflow itself: humans set direction, many agents operate in parallel, and the system has to stay legible.

/ current system

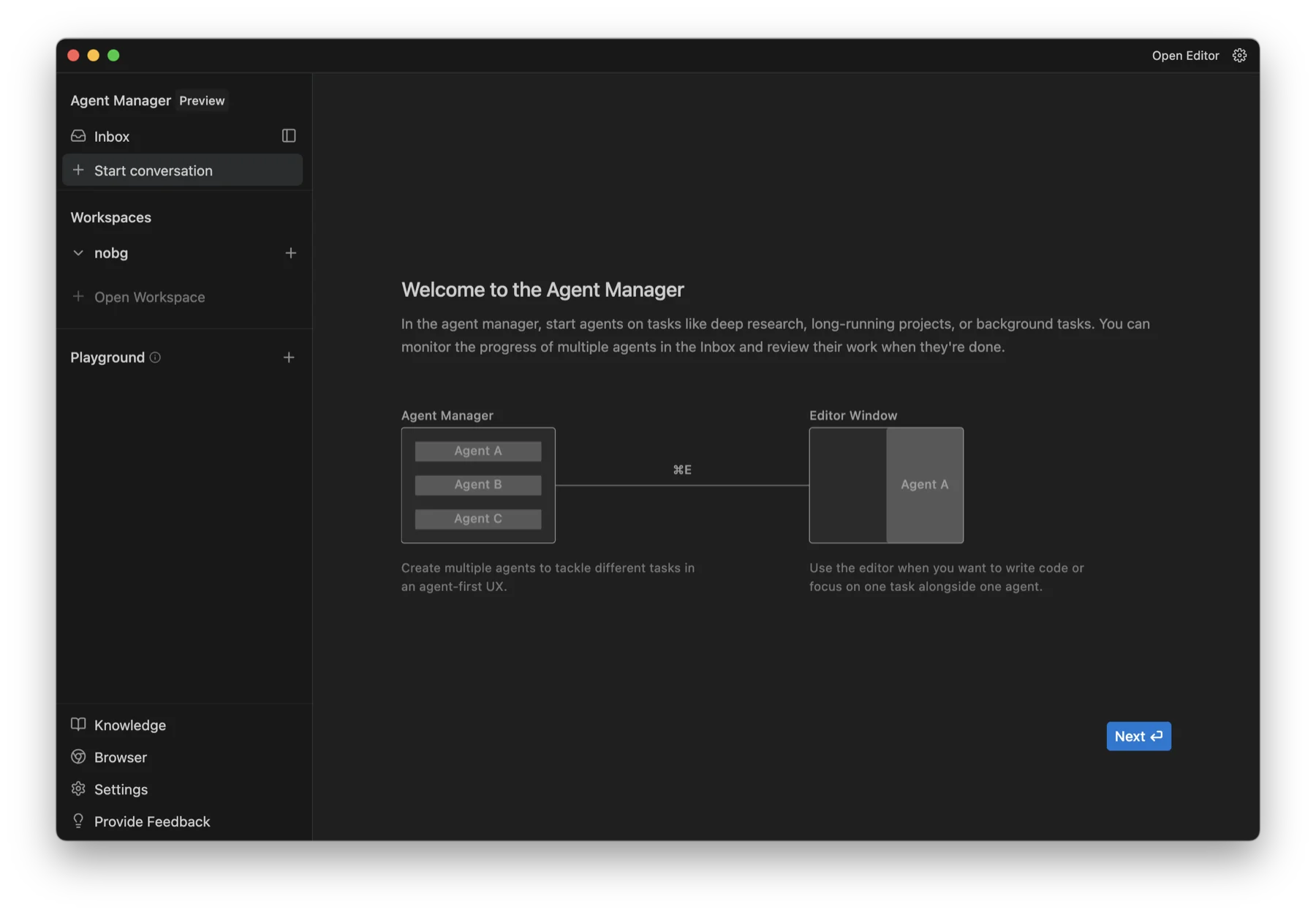

The interesting part is not chat. It is giving one operator a clean way to launch parallel agents, inspect progress, and keep the system legible while real work is moving.

Parallel agents

The UI is designed around multiple active agents, not one assistant taking turns in a single conversation.

Workspaces

Separate workspaces and bounded responsibilities are not implementation details. They are what make parallel agent execution sane.

Evidence

The operator should be able to see what changed, what completed, and what needs review without decoding a wall of intermediate chatter.

Co-founder

Building AI-native psychiatric operations with staged autonomy, approval rails, multi-agent workflows, and regulator-grade evidence.

2018-2020 / Product manager

Learned what coordination debt looks like inside a giant organization and why explicit systems beat alignment theater.

Before and alongside / Builder

Launches, iOS apps, motion work, and creative tools that sharpened product taste and shipping instincts.

03 / Proof

Enough proof to establish credibility, including a live-ish GitHub trace, without turning the homepage into a profile dump.

/ GitHub activity

Roughly live from GitHub. The point is not streak theater. Real systems work should leave visible traces in code, reviews, and iteration.

Refreshed a few times a day.